Your AI Strategy Won’t Work Until Your Data Does

April 23, 2026

Every pharma and biotech CEO I talk to wants the same thing: AI that accelerates drug discovery. Fewer failed candidates. Faster time to clinic. Lower cost per program.

Most of them are not ready for it.

Not because the AI is not good enough. It is. The models are powerful, the agents are increasingly capable, and the toolkits are maturing fast. The problem is simpler and harder at the same time: their data is a mess.

I sat down recently with Philip Mounteney, VP of Science and Technology at Dotmatics (now part of Siemens), for the Chalk Talk podcast. Philip has spent his career at the intersection of data science and drug discovery, and his diagnosis of the industry was blunt: most companies chasing AI have yet to wrap their arms around their own data.

That should concern all of us.

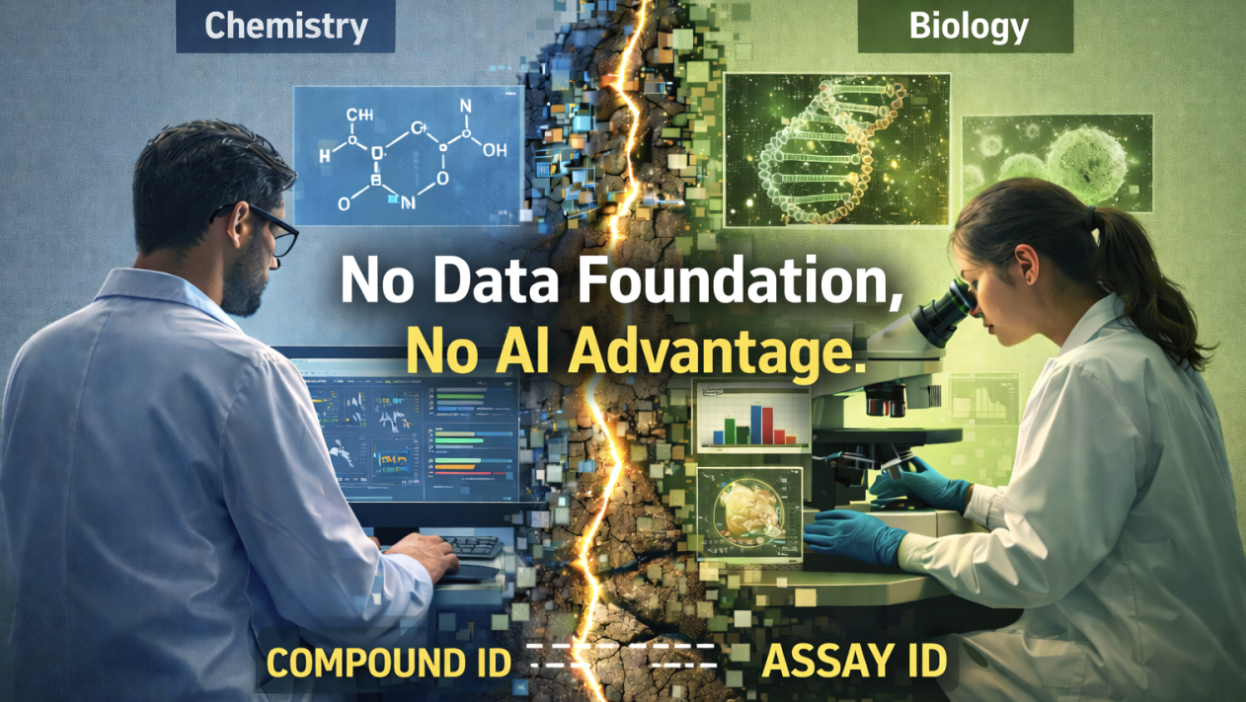

The Data Foundation Problem

Drug discovery generates an extraordinary volume and variety of data. Chemists use one system. Biologists use another. Flow cytometry, high-throughput screening, electronic lab notebooks: each tool creates its own silo with its own backend and its own vocabulary. Philip described it simply: “Chemists and biologists don’t talk the same language. They’re using different systems of record.”

This is not a new problem. It has been the central friction point in pharmaceutical R&D for two decades. What is new is the consequence of leaving it unsolved: you cannot run meaningful AI on fragmented, poorly labeled, inconsistently structured data.

Garbage in, garbage out. That phrase has never been more relevant than it is right now.

The industry talks a lot about FAIR data principles: findable, accessible, interoperable, reusable. Philip pointed out that even building a FAIR data system for a single cell in the human body is incredibly challenging, because the science itself never stops changing. New modalities, new assay types, new data formats: the target keeps moving.
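To make the four principles concrete, here is a minimal sketch of a FAIR gap check on one dataset's metadata. It is an illustration under stated assumptions, not a standard or a Dotmatics feature: the `DatasetRecord` fields and the `fair_gaps` helper are hypothetical names, and a real assessment would cover far more than five fields.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DatasetRecord:
    """Hypothetical metadata for one dataset (illustrative fields only)."""
    identifier: Optional[str] = None   # findable: a stable, unique ID
    access_url: Optional[str] = None   # accessible: where it can be retrieved
    vocabulary: Optional[str] = None   # interoperable: shared schema or ontology
    license: Optional[str] = None      # reusable: terms of reuse
    provenance: Optional[str] = None   # reusable: how the data was generated


def fair_gaps(record: DatasetRecord) -> List[str]:
    """Return the FAIR principles for which required metadata is missing."""
    checks = {
        "findable": [record.identifier],
        "accessible": [record.access_url],
        "interoperable": [record.vocabulary],
        "reusable": [record.license, record.provenance],
    }
    # A principle counts as a gap if any of its fields is empty or None.
    return [name for name, fields in checks.items() if not all(fields)]


# Assumed example values: an ID and a location, but no shared vocabulary,
# license, or provenance.
record = DatasetRecord(identifier="assay-0001",
                       access_url="https://example.org/assay-0001")
print(fair_gaps(record))  # ['interoperable', 'reusable']
```

Even a check this simple, run across an archive, gives a rough first answer to how much historical data is actually usable.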

What the Automotive Industry Already Figured Out

Philip drew a comparison that stuck with me. The automotive industry used to build clay models of cars to test aerodynamics. It was expensive, slow, and skill-intensive. Today, they build those models in the computer and iterate through thousands of designs before anything reaches a factory floor.

Pharma is not there yet. There is even a term for the gap: Eroom’s Law (“Moore” spelled backwards). While computing power has doubled roughly every two years, pharmaceutical R&D productivity, measured as new drugs approved per dollar spent, has been declining for decades. It can take up to 10 years and billions of dollars to bring a single drug to market, with the first six years often spent just identifying the right target, modality, and candidate molecule.

The promise of AI is to compress that timeline dramatically: fail faster in the computer so you waste fewer years in the wet lab. But the prerequisite is having data that AI can actually work with.

Three Signs Your Organization Is Not AI-Ready

Based on my conversation with Philip and patterns I’m seeing across the industry, here are three warning signs:

1. You treat AI as a silver bullet.

Philip was candid: some companies see AI as a fix-all for every challenge they face, and that scares him. If your AI strategy starts with the technology rather than the data it will consume, you are building on sand.

2. Your teams cannot find last year’s data.

One of the most exciting opportunities in drug discovery is repurposing: going back through historical data to find diamonds that were not relevant to the original target but could be transformative today. If your data is not stored in a FAIR platform, decades of research might as well not exist.

3. You need a data scientist to answer a simple question.

Philip shared an example of using an AI agent to run a structure-activity relationship analysis from a single natural language prompt. The agent understood the chemistry toolkit, inferred the intent, broke down the molecules, and produced SAR observations automatically. That is the kind of democratization AI should deliver. If your scientists still need a cheminformatician to run a basic correlation, your data infrastructure is the bottleneck.
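For a sense of scale, the "basic correlation" in the third sign is only a few lines of code once the data is clean and in one place. The sketch below is not the agent Philip described and not a full SAR analysis; it computes a Pearson correlation between one molecular property and measured potency. The `pearson` helper is written here for illustration, and the numbers are made-up placeholder values, not real assay results.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


# Made-up illustrative values: a lipophilicity measure (logP) and a
# potency measure (pIC50) for five hypothetical compounds.
logp = [1.2, 2.0, 2.9, 3.5, 4.1]
pic50 = [5.1, 5.6, 6.4, 6.3, 7.0]

print(f"Correlation between logP and pIC50: {pearson(logp, pic50):.2f}")
```

The calculation is the easy part. Getting both columns out of separate systems, with matching compound identifiers and units, is usually where a specialist ends up being needed.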

The Payoff: From Agentic to Predictive

When I asked Philip what comes after agentic AI, his answer was immediate: predictive AI. Digital twins of cells and disease pathways. The ability to iterate over thousands of molecular designs in silico and predict which ones will work before touching a bench.

Siemens is already doing this in aerospace and manufacturing. At a recent Siemens conference, Philip saw demonstrations of predictive models iterating over thousands of wing designs to identify optimal configurations before production. The healthcare sector is not there yet, but Philip estimates it is now five to ten years away, down from an estimate of well over a decade just three years ago.

The implication is enormous. Cheaper drugs. Faster timelines. Fewer side effects. And perhaps most importantly: the ability to pursue diseases that are currently not financially viable, because the cost of failure drops low enough to expand the aperture.

But none of it happens without the data.

The Bottom Line

AI is not the hard part. Data is the hard part. The companies that will lead the next era of drug discovery are not the ones with the most sophisticated models. They are the ones that invested in harmonizing their data, building ontologies, and creating the digital threads that connect research to production. Everything else follows from that.

If you are a pharma or biotech leader building your AI roadmap, start with an honest assessment: can your teams find, access, and reuse the data you already have? If the answer is no, that is where the real work begins.

Want to discuss what AI readiness looks like for your organization? Get in touch and let’s talk about how to build the data foundation that makes everything else possible.