Your AI Strategy Is Only as Strong as the Data Nobody Audited

April 23, 2026

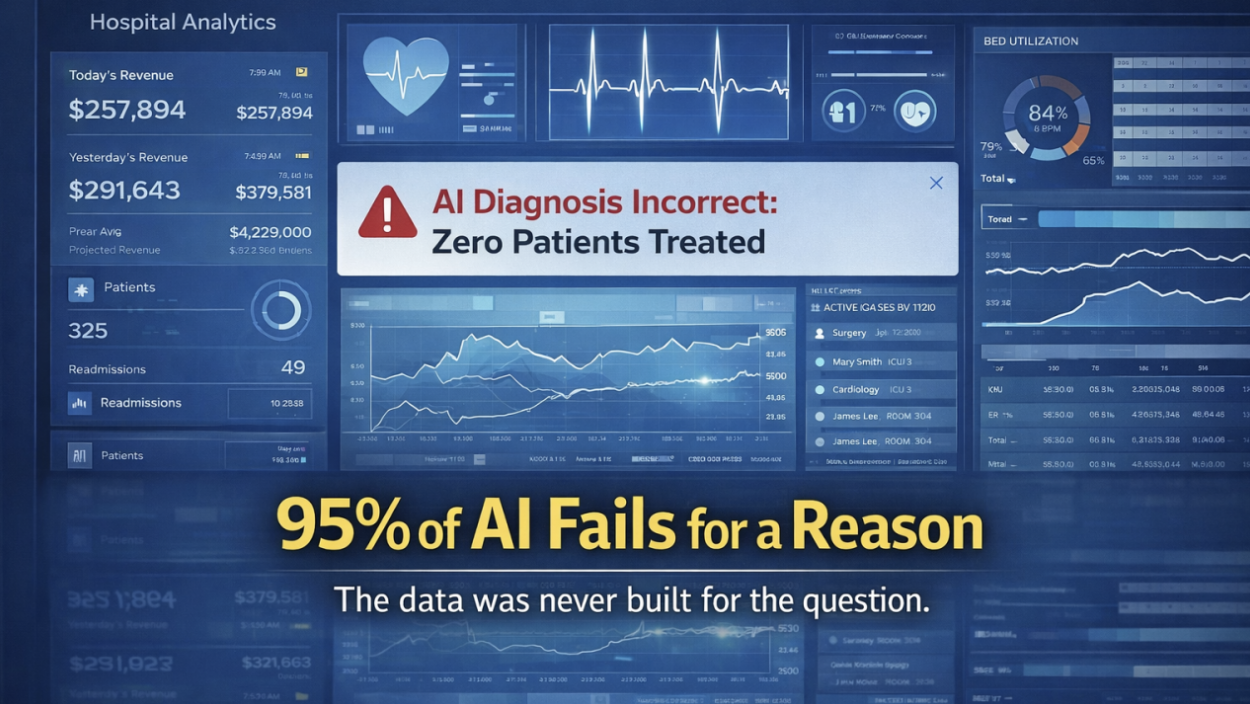

Enterprises will spend an estimated $149 billion on healthcare AI by 2030. Yet according to a 2025 MIT study, more than 95% of companies investing in AI see no measurable bottom-line impact. That is not a technology problem. It is an infrastructure problem, and most organizations are not even looking in the right place to find it.

The pattern is consistent across industries but especially acute in healthcare: organizations invest heavily in models, platforms, and talent while treating the data those systems depend on as settled infrastructure. It is not settled. In most cases, the data was never designed for the questions now being asked of it.

This is the gap that determines whether an AI investment creates lasting advantage or becomes another line item with no return.

A Capital One survey conducted by Forrester Research in 2024 found that 73% of enterprise data leaders identified data quality and completeness as the primary barrier to AI success. Not model capability. Not engineering talent. Not budget. The data itself. And in healthcare, where data complexity is orders of magnitude greater than in most industries, the barrier is correspondingly higher.

The Foundation Was Built for a Different Purpose

Healthcare data infrastructure was designed around a single imperative: getting providers paid. Claims data, billing codes, encounter records: these systems were optimized for reimbursement accuracy. Fields that do not affect payment go unchecked. Data lineage is rarely documented. Quality standards apply to the billing cycle, not to strategic analysis.

Research from Kythera Health illustrates the consequences directly. When their team pointed large language models at raw claims data and asked basic business questions, they got zero correct answers. Not approximate answers. Not directionally useful answers. Zero. The models were not defective. The data was never built for the purpose it was now being asked to serve.

But when the team built a structured abstraction layer, translating five billing claims into one clean surgery record with context, the same models performed dramatically better. The technology did not change. The foundation did.
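To make the idea of an abstraction layer concrete, here is a minimal Python sketch of collapsing several claim rows into one surgery record. It is an illustration only, not Kythera's method: the field names (`claim_id`, `patient_id`, `service_date`, `component`, `billed`), the sample values, and the rule of grouping by patient and service date are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class SurgeryRecord:
    """One surgical episode assembled from several billing claims (hypothetical shape)."""
    patient_id: str
    service_date: str
    components: list = field(default_factory=list)
    claim_ids: list = field(default_factory=list)
    total_billed: float = 0.0


def build_surgery_records(claims):
    """Group claim rows that share a patient and service date into one record each."""
    grouped = {}
    for claim in claims:
        # Assumed grouping rule: same patient, same day means same episode.
        key = (claim["patient_id"], claim["service_date"])
        if key not in grouped:
            grouped[key] = SurgeryRecord(patient_id=key[0], service_date=key[1])
        record = grouped[key]
        record.claim_ids.append(claim["claim_id"])
        record.components.append(claim["component"])
        record.total_billed += claim["billed"]
    return list(grouped.values())


# Five made-up claim rows that all describe the same operation.
claims = [
    {"claim_id": "C1", "patient_id": "P1", "service_date": "2025-03-04", "component": "surgeon", "billed": 4000.0},
    {"claim_id": "C2", "patient_id": "P1", "service_date": "2025-03-04", "component": "anesthesia", "billed": 1500.0},
    {"claim_id": "C3", "patient_id": "P1", "service_date": "2025-03-04", "component": "facility", "billed": 3000.0},
    {"claim_id": "C4", "patient_id": "P1", "service_date": "2025-03-04", "component": "implant", "billed": 800.0},
    {"claim_id": "C5", "patient_id": "P1", "service_date": "2025-03-04", "component": "pathology", "billed": 200.0},
]

records = build_surgery_records(claims)
print(len(claims), "claims ->", len(records), "surgery record")
print(records[0])
```

A real layer would need far more careful episode logic than a date match, but the shape is the point: the model is asked about surgeries, so it should be handed surgeries, not billing fragments.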

This distinction matters because it reframes where the risk sits. The risk is not in model selection or algorithm design. The risk is in the assumption that existing data infrastructure can support new analytical demands without fundamental re-architecture.

Data Architecture Is Pack Infrastructure

In the work I do with organizations, I use a concept called the Pack: the smallest complete set of interdependent capabilities and handoffs that must move together to reliably keep a customer promise. The Pack is not the team. The team operates the Pack.

Data architecture is part of the Pack. It is not background IT. It is a capability that every other capability in the system depends on. When the data layer is misaligned with the organization’s decision-making needs, every downstream capability is constrained by that misalignment, whether the organization sees it or not.

This is where the concept of variance as signal becomes critical. In the EdgeFinder lens I work through, variance is not error. It is the most valuable intelligence available. The gap between what data appears to contain and what it actually means for the decisions being made on it is a form of Operational Variance: a deviation between how work actually flows and how it was designed to flow.

Most organizations have never tested for this variance. The data fields exist. They look populated. Dashboards render. Reports generate. But the data underneath was designed for billing adjudication, not for the strategic or clinical questions those reports now attempt to answer.
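One simple way to start testing is to separate "populated" from "meaningful." The sketch below is a hypothetical example in Python: the field name `discharge_disposition`, the sample rows, and the list of placeholder values are assumptions, and every organization would need its own list of defaults.

```python
def profile_field(rows, field_name, placeholders=("", "UNKNOWN", "N/A")):
    """Compare how often a field is filled with how often it holds a real value."""
    if not rows:
        raise ValueError("need at least one row to profile")
    values = [row.get(field_name) for row in rows]
    filled = [v for v in values if v is not None]
    # Assumed rule: placeholder strings count as filled but not as meaningful.
    meaningful = [v for v in filled if v not in placeholders]
    return {
        "fill_rate": len(filled) / len(values),
        "meaningful_rate": len(meaningful) / len(values),
    }


# Made-up rows: the field is always present, but rarely says anything.
rows = [
    {"encounter_id": "E1", "discharge_disposition": "UNKNOWN"},
    {"encounter_id": "E2", "discharge_disposition": "UNKNOWN"},
    {"encounter_id": "E3", "discharge_disposition": "home"},
    {"encounter_id": "E4", "discharge_disposition": "N/A"},
]

profile = profile_field(rows, "discharge_disposition")
print(profile)
```

In this toy data the field is filled in every row, yet only one row in four carries a usable value. A dashboard built on fill rate alone would never show that gap.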

Precisely’s 2025 Data Integrity Trends Report found that 64% of organizations cite data quality as their top data integrity challenge, and 77% rate their data quality as average or worse. These numbers have not improved meaningfully despite years of investment in data governance. The problem is not awareness. It is that organizations continue to treat data architecture as an IT function rather than a strategic capability within the Pack.

The Fortify Problem: Why AI Investments Stall After Launch

There is a pattern I see repeatedly in organizations that invest in AI. It looks like this: leadership sees the opportunity. AI can transform clinical decision support, operational efficiency, revenue cycle management. The vision is clear and the case is compelling. Resources are committed. Budget is allocated, vendors are selected, pilots are launched. Early results look promising.

And then the initiative stalls. Not because the technology stopped working, but because nobody rebuilt the data infrastructure, the governance processes, or the cross-functional handoffs that the AI system now depends on to operate at scale. The organization made a bold move and then failed to secure the ground it moved across.

Think of it like a home inspection. You find a leaky lead pipe in the basement. You fix the leak. But do you also check whether the rest of the house has lead pipes? Because if you do not, the fix you just made is temporary. The same problem is waiting in the walls, and it is affecting your long-term health whether you see it or not.

In the EdgeFinder lens, this maps to a continuous loop of Find, Advance, Fortify. Find is the discipline of slowing down to examine what is actually happening: reviewing where things have drifted, where the gaps are, where the next threat or opportunity lives. Advance is doing something about it: committing resources, choosing a direction, acting on what you found. Fortify is making sure the entire organization adopts the advance so you never have to go back and do it again. It is thoroughness, speed, and closure. Fortify is what turns a local fix into an organizational standard, so the whole Pack moves forward together rather than one team solving a problem that three other teams still have.

All three are required. And the primary failure mode, the one I see most often in AI adoption, is Find and Advance without Fortify. The organization spots the leaky pipe and fixes it, but never checks the rest of the house. The pilot succeeds. The scale-up does not. And the reason is almost always the same: nobody did the Fortify work to make the gain hold across the entire organization.

The numbers confirm this pattern. A 2024 Boston Consulting Group study found that 70% of AI implementation challenges are related to people and processes, not technical issues. Gartner estimates that 85% of AI models fail due to poor data quality. And the Forrester survey cited earlier, conducted for Capital One among 500 enterprise data leaders, found that 73% identified data quality and completeness as the primary barrier to AI success.

These are not technology failures. They are Fortify failures. The organization moved forward without securing the ground it moved across.

Sensing the Variance Before It Becomes Visible Failure

The question for leaders is not whether their data quality is perfect. It never will be. The question is whether anyone in the organization is actively detecting where data infrastructure and decision-making needs have drifted apart.

This is the work of Sensing, one of five Strategic Meta Skills that install the EdgeFinder lens as organizational muscle. Sensing detects and interprets meaningful variance before it becomes visible failure. Its filter is pattern recognition: the ability to see what is actually happening versus what the system reports.

In the context of AI readiness, Sensing means asking a specific set of questions before investing further:

1. Was this data field designed for the question we are asking of it?

Most healthcare data fields were designed for billing. If you are asking clinical or strategic questions of billing data, you have found your first signal.

2. When was the last time someone tested data output against known ground truth?

Kythera’s research showed that the gap between data appearance and data reality can be total. Zero correct answers is not an edge case; it is a warning about untested assumptions.

3. Who in the organization is accountable for data architecture as a strategic capability?

If data architecture reports through IT operations rather than strategic leadership, the organization has already signaled that it treats data as infrastructure maintenance, not competitive advantage.
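The second question, testing data output against known ground truth, can begin very small. Here is a rough Python sketch, assuming a hand-verified sample (for example, from chart review) keyed by a record ID. The function name `field_agreement`, the field `primary_procedure`, and all sample values are hypothetical.

```python
def field_agreement(records, ground_truth, field_name):
    """Return (agreement_rate, mismatched_ids) for one field against verified values."""
    if not ground_truth:
        raise ValueError("need at least one verified value to compare against")
    mismatches = [
        record_id
        for record_id, true_value in ground_truth.items()
        # A record missing from the system counts as a mismatch.
        if records.get(record_id, {}).get(field_name) != true_value
    ]
    rate = 1 - len(mismatches) / len(ground_truth)
    return rate, mismatches


# What the system of record says (made-up values).
records = {
    "E1": {"primary_procedure": "knee replacement"},
    "E2": {"primary_procedure": "office visit"},
    "E3": {"primary_procedure": "hip replacement"},
}

# What a reviewer confirmed actually happened (made-up values).
ground_truth = {
    "E1": "knee replacement",
    "E2": "knee replacement",
    "E3": "hip replacement",
    "E4": "spinal fusion",
}

rate, mismatches = field_agreement(records, ground_truth, "primary_procedure")
print(f"agreement: {rate:.0%}, mismatched records: {mismatches}")
```

The mismatch list matters more than the rate. Each ID on it is a place where the data and the reality have separated, which is exactly the variance worth investigating before more money goes into models.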

What This Means for Leaders Investing in AI

The organizations that will extract durable value from AI are not the ones with the best models or the largest budgets. They are the ones that treat data architecture as a core capability within the Pack, subject to the same strategic scrutiny as sales execution, product development, or customer experience.

This is not a niche concern for data teams. It is the central strategic question for any organization deploying AI at scale.

Treating data architecture this way requires a shift in where leadership attention goes. Only 16% of healthcare organizations have system-wide AI governance frameworks, according to recent research. The investment is flowing into models and platforms. The governance, architecture, and Fortify work that makes those investments hold is still, in most organizations, an afterthought.

The variance between what organizations spend on AI and the returns they realize is not a mystery. It is a signal. And the organizations that learn to read that signal, to detect where their data infrastructure and their decision-making needs have quietly separated, will be the ones that convert AI from a cost center into sustained competitive advantage.

The Bottom Line

AI readiness is not about model selection. It is about whether the data your organization depends on was designed for the questions you are now asking of it. Before the next AI investment, audit the foundation. Test one critical data field against its actual purpose. If the answer is that it was built for billing, not for the decision it now informs, you have found the variance that will determine whether your AI strategy creates lasting value or joins the 95% that do not.

If this pattern sounds familiar in your organization, I would welcome the conversation. Get in touch and let’s talk about where your data infrastructure and your strategic ambitions may have quietly drifted apart.